April 2026 AI links

(continues March 2026 AI links)

1iv26

"Conviction Collapse" and the End of Software as We Know It O'Reilly

...AI is not just a tool. It is a substrate that we shape. It's a medium, like clay or marble or bronze for a sculptor, or words for a writer. Everybody had access to the same capabilities of English as Shakespeare, but Shakespeare made something out of them that nobody else did. Creating a software product is increasingly like creating a document or an image or a piece of music. And that means that it can range from something throwaway to an enduring work of art.

...Harper and I both worry about the same thing: So much of Silicon Valley right now is making affordances for capital to win. What are the affordances that would help humans to win? Harper frames it as short-term versus long-term capitalism. I think about it in terms of mechanism design, the structures and incentives that shape what outcomes are even possible.

Google AI announcements from March google.com

Introducing MolmoWeb—An open agent for the web Ai2

The web is the world's largest software platform. Agents that can navigate it reliably could dramatically expand access to information and digital services. But most web agents are closed, and the ones that work well have been historically built on proprietary models.

MolmoWeb works by looking at the same screen you do: given a task and a live webpage, it views the screenshot, decides what to do next, and takes action. Because it operates on screenshots rather than underlying page code, it won't break when a website changes its HTML.
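The look, decide, act cycle described in the excerpt can be sketched in a few lines. This is a toy illustration, not MolmoWeb's code or API: `FakePage`, `toy_policy`, `Action`, and `run_agent` are hypothetical names, the "screenshot" is a plain string rather than pixels, and the decision step is a hard-coded rule where a real agent would call a vision-language model.

```python
from dataclasses import dataclass


@dataclass
class Action:
    kind: str          # "type", "click", or "done"
    target: str = ""   # label of the on-screen element, as seen in the screenshot
    text: str = ""     # text to type, if any


@dataclass
class FakePage:
    """Toy stand-in for a live webpage (assumption: a real agent drives a browser)."""
    query: str = ""
    submitted: bool = False

    def screenshot(self) -> str:
        # A real screenshot is an image; a string keeps this sketch runnable.
        if self.submitted:
            return f"results for '{self.query}'"
        return f"search box containing '{self.query}' and a Search button"

    def apply(self, action: Action) -> None:
        if action.kind == "type":
            self.query = action.text
        elif action.kind == "click" and action.target == "Search":
            self.submitted = True


def toy_policy(task: str, screenshot: str) -> Action:
    """Hypothetical decision step; it sees only the screenshot, never the page code."""
    if screenshot.startswith("results for"):
        return Action("done")
    if f"'{task}'" in screenshot:
        return Action("click", target="Search")
    return Action("type", target="search box", text=task)


def run_agent(task, page, policy, max_steps=10):
    history = []
    for _ in range(max_steps):
        shot = page.screenshot()      # 1. view the screen
        action = policy(task, shot)   # 2. decide what to do next
        history.append(action)
        if action.kind == "done":
            break
        page.apply(action)            # 3. take the action
    return history


history = run_agent("open web agents", FakePage(), toy_policy)
print([a.kind for a in history])
```

Because the policy reads only what `screenshot()` returns, changing how `FakePage` stores its state internally would not affect it, which mirrors the excerpt's point about not depending on the underlying HTML.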

2iv26

Is this the definitive AI algorithm? Ignacio de Gregorio at Medium

... imitation only takes you so far. It's reasonably good at compressing knowledge; the model does learn a lot of facts, but it's not great for problem-solving, in the same way that dictation may help teach kids to write, but won't take you very far in teaching them maths.

For problem-solving tasks, we need exploration. We need the model to trial-and-error its way into the answer. It needs to explore ways to solve the task to truly grasp the underlying concepts, just as kids only truly learn maths when they actually try the problem by themselves.

And leaves us to the other big training method: exploration.

The "Street-Smart" Revolution: LeCun and Malik's Manifesto for Autonomous AI evoailabs

...The AI world is currently split. On one side, we have the "Book-Smart" giants — Large Language Models (LLMs) that have read the entire internet but can't tie a shoelace. On the other, we have the "Street-Smart" aspirants — robotic systems that can move but lack the general reasoning to navigate a messy kitchen.

...Community Reactions: Hype vs. Skepticism

The community response across Reddit (r/singularity) and Hacker News has been electric but cautious:

- The "World Model" Believers: Many agree that LLMs have hit a wall. One popular sentiment on Reddit notes: "We've spent 5 years scaling 'words.' It's time to scale 'reality.' This paper provides the first credible blueprint for how to do that."

- The Deployment Skeptics: In the r/SelfDrivingCars community, users are more grounded. Some argue that while the "System M" architecture is theoretically sound, the "sim-to-real" gap remains a massive hurdle that a 40-page paper can't solve overnight.

- The Funding Buzz: The fact that NVIDIA and Bezos are reportedly backing the "AMI" philosophy suggests that the "smart money" is moving away from purely linguistic models toward systems that can interact with the physical world.

Amazon Just Proved AI Ain't The Answer YET AGAIN Will Lockett at Medium

Nvidia CEO and professional AI glazer Jensen Huang recently claimed that we have already achieved AGI (Artificial General Intelligence). Firstly, that raises serious concerns about his definition of intelligence. Current AI systems are more akin to a deeply hallucinating, plagiaristic sycophant than to any form of coherent intelligence....Take Amazon, for example. For the third time, they have learned the painful lesson that generative AI is not intelligent, can't replace human intelligence, and isn't a productivity tool.

...Earlier this month, the Financial Times reported that Bezos's favourite little monopoly had effectively called a giant emergency meeting of its remaining engineers to try and fix the rapidly increasing number of outages taking Amazon.com down.

...According to the official line, generative AI was a "contributing factor" in the botched "software code development" that caused these outages. But that is a bit like saying the untimely death of Archduke Franz Ferdinand was a contributing factor to World War I. This reeks of a PR spin designed to hide the embarrassment of the own goal that is Amazon's AI "transition", particularly when you consider the actual problems causing these outages, the engineers' solutions to prevent them, and the wider context of Amazon's recent business decisions. It all points to AI being the culprit.

...Last month, the Financial Times reported that Amazon's own "agentic" Kiro AI coding tool was to blame. Engineers had allowed Kiro to make changes to Amazon's AWS code and make "autonomous decisions". As it turns out, Kiro ain't that clever; it pulled a Musk move and deleted the entire working code environment before recreating it from the ground up with a ton of fatal bugs.

...it seems to be both wild "agentic" AI and AI slop coding that are the culprits behind Amazon's outages, and the smoking gun is the emergency solution these engineers came up with. Are you ready? Their solution is to require junior and mid-level engineers to ask senior engineers to sign off on any AI-assisted changes. This is almost fully admitting that AI caused all these outages.

But why are these engineers using AI like this? After all, 96% of professional coders explicitly don't trust AI-generated code. These guys know giving it the keys to the kingdom was a bad idea.

Well, they were basically forced to.

Amazon has laid off thousands of engineers and plans to soon lay off around 30,000 workers, all while their major services, like AWS, expand dramatically. These services simply can't be run on a skeleton crew, which makes this an obvious attempt to replace workers with AI automation. Indeed, last year, while these layoffs were happening, leaked documents showed Amazon's plans to replace 75% of its workforce with automation and AI.

In short, these engineers are likely so stretched that they are forced to turn to AI to speed up their output. On top of that, Amazon recently mandated that 80% of its engineers use Kiro at least once a week. This isn't necessarily a problem, but because they are so stretched, they don't have the time to check the AI's outputs, which practically guarantees these fatal mistakes will happen over and over again.

...once again, Amazon has also proved AI isn't a productivity tool either.

It completely kills productivity for these engineers to ask junior and mid-level engineers to obtain senior engineers' approval for AI-assisted changes. Amazon engineers are expected to use AI coding tools like Kiro. So this means almost every line of code now has to be reviewed and approved by a senior engineer. Being a jumped-up debugger is not part of a senior engineer's job description! This is a huge bottleneck for junior and mid-level coders, who are already far too understaffed, and it burdens senior engineers with heavier workloads and scope bloat, which detracts from their main responsibility of ensuring the entire project actually functions on a wider scale. In other words, this AI was implemented to make these departments more productive, but that decision led to a steep and damaging decline in quality. So, Amazon's solution is to make these teams far less productive from top to bottom through enforced micromanagement.

The companies that win with AI won't look like companies at all Enrique Dans at Medium

For the past two years, the dominant corporate conversation around AI has been painfully predictable. Executives talk about productivity, copilots, efficiency gains, and cost savings. Boards demand AI road maps. Consultants package urgency into slides. Entire organizations scramble to prove that they are "doing something with AI."

But beneath all that noise lies a much bigger shift, one that many companies still seem determined not to see: AI is not simply a tool for making organizations more efficient. It is a technology that changes the minimum viable size of an organization.

And once that happens, many of the assumptions that defined the modern company begin to look far less stable than they used to.

...For more than a century, scale meant headcount. If you wanted to do more, you hired more people. If you wanted to grow, you added layers: more analysts, more managers, more coordinators, more specialized roles, more internal reporting, more process. The modern corporation was built around one simple assumption: complexity requires humans, and humans require structure.

That assumption is now under pressure. A single person equipped with the right AI tools can already do work that, not long ago, required a small team. Research, drafting, coding, analysis, translation, design exploration, synthesis, customer support, prototyping: none of these functions disappear, but many of them are increasingly being compressed.

...In the traditional company, value came from coordinating large groups of people. In the AI-enabled company, value increasingly comes from designing systems in which a relatively small number of humans coordinate workflows, agents, models, data sources, and decision processes.

That is a very different skill. It is less about supervising labor and more about architecting capability.

...The wrong question is this: How can AI make our current company more efficient?

The right question is much more uncomfortable: If we were building this company today, in a world where AI already exists, would we build it like this at all?

In many cases, the answer is obviously no. We would not build so many handoffs. We would not create so many reporting layers. We would not separate functions in the same way. We would not assume that every form of growth requires proportional hiring. We would not define professionalism by the ability to navigate internal complexity. And yet, that is exactly what many AI strategies are trying to preserve.

This is why so many corporate AI initiatives feel underwhelming. They are designed not to rethink the company, but to protect it from rethinking itself. They use a transformative technology in the most conservative way possible.

...Economists have long described technologies such as electricity, steam engines, and computers as general-purpose technologies: innovations that reshape entire economic systems rather than individual industries. Artificial intelligence increasingly appears to belong to that category.

The internet reduced the cost of publishing, and media was transformed. Suddenly, individuals and very small teams could do things that once required entire institutions. AI is beginning to do something similar to organizations more broadly.

We are entering an era in which small teams will be able to generate outputs, speed, and market impact that once required far larger companies. Not because humans have become superhuman, but because leverage has changed. Researchers studying innovation dynamics have long observed that small teams tend to produce more disruptive breakthroughs, while large teams focus more on developing existing ideas.

...The real divide in the AI economy will not be between companies that use AI and companies that do not. That distinction is already becoming meaningless.

The real divide will be between companies that use AI to reinforce old structures and companies that use it to redesign themselves around a new logic of leverage. One group will get incremental gains. The other will redefine what a company can be.

That is why the most successful organizations of the next decade may not look like the successful organizations of the last one. They may have fewer employees, fewer layers, fewer silos, and fewer rituals inherited from an industrial logic that no longer fits.

They may look, from the outside, almost unnervingly small for what they are capable of doing. And that is the point.

The companies that win with AI won't simply use new tools: they will abandon old assumptions. And once they do, they may not look like companies at all.

How is this even legal? Ignacio de Gregorio

...I've come to view the AI industry as fast food: tastes incredible, but you really, really don't want to see what's inside.

I'm an avid AI user; it's incredible (when used right). But its immense cash intensity is forcing the incumbents' hand into practices I believe will soon be illegal.

...The truth is, much of the revenue this industry claims is actually "accounting revenue," which, in this case, changes things significantly.

...While AI requires huge amounts of spending, closing in on the one trillion per year threshold, the revenues are nowhere near that.

Thus, in the absence of revenues, incumbents are getting imaginative. But how much? Well, a lot.

The idea is to create a circle of fictitious money supposedly flowing between companies, when in reality, most of it is not real. However, they are still recognized in accounting terms (i.e., they are recorded as revenue on the company's income statement).

This is like sending yourself money from another of your accounts and calling it new revenue. Sounds like I've gone crazy, but this is literally what is going on.

How this is legal is just beyond me. They are literally taking their own money and recognizing it as revenue.

AI Through the Lens of Sci-Fi Prophecy: Why the Supercomputer HAL 9000 Intended to Kill Humans srgg6701 at Medium

...Some time after the flight began, HAL's behavior began to show oddities that did not escape the astronauts' attention. After consulting, they decided to shut down the computer's higher cognitive modules, fearing that if they did not, the mission's success and their lives would be in danger.

However, as it turned out, HAL feared the same thing. It anticipated their intentions and struck first.

...It turns out that the cause of the tragedy was not AI malevolence, but an internal conflict in its logic module. The fundamental directive embedded in HAL 9000's consciousness was to always tell the astronauts the truth. But besides this, there were two more directives. First, to do everything possible to complete the mission, and second, not to tell Bowman and Poole about the mission's true purpose until the ship reached the end of its journey.

So, it was the logical contradiction between the directive to always tell the truth and to withhold information that led to anomalies in HAL's situation analysis. Eventually, it concluded that the problem lay in the humans, which jeopardized the entire mission. Based on this logic, it decided to eliminate everyone who could interfere with its execution.

New Words for a New Industry ftrain.com

...AI is blurring—and even destroying—the distinctions between disciplines. Do we need a new way to talk about work? On this week's episode, Paul tests out a few of his AI-era neologisms on a skeptical Rich: Perhaps you are a "custolient," looking to purchase the services of a "praygency" for your next project? (Yes, Paul insists the "y" in "praygency" is vital.) Are these new blended terms helpful, or just a way of talking around a very uncertain landscape?

3iv26

Higher education must bridge the AI gap Marie Lynn Miranda Science editorial

AI will no doubt reshape work across broad sectors of the global economy. What distinguishes this moment is not disruption alone, but the pace, scale, and portability of the technology itself. The technology is diffusing faster and more broadly than previous innovations, compressing the time that institutions have to respond. AI's unprecedented speed and scale create urgency around deliberately shaping its distribution. Society faces risks, but also a (narrow) window of opportunity to shape outcomes in a way that benefits everyone.

As society adapts to AI, it has a chance to do better than with past revolutions. The greater risk is not that AI will eliminate jobs, but that its benefits will once again accrue unevenly. Here, higher education has an opportunity to get this right, ensuring that AI creates broad advantages across all divides—race, income, geography—that characterize the human experience.

Whatever Happens to Music Will Happen to AI Bruce Sterling at Medium

...this is the high summer of AI. It's even a global-warming heat-wave of AI. AI has never moved this fast or been this powerful. It truly feels like science fiction come to life. It even feels like metaphysics turned into products and services.

It's technically complex and also moving very fast, so it's hard to find the proper words to describe what is going on and to place it in perspective. Even AI chatbots can't generate words to describe it.

...People always have music. However, there are certain musical periods where people get carried away. Such as the birth of ballet and opera in Italy. Also, jazz of the Jazz Age, and rock and roll of the 1960s. These are musical vogues in which music seems to restructure society. People take the new music to heart — not because they understand the music, but because they DON'T understand the music. They've never heard music with such novelty and intensity. It's music with a strange new potential. Often it feels threatening and alien in some ways, like a sudden soundtrack of destruction for older traditions and values. Of course that scary aspect makes the music even more exciting.

Music has had cultural revolutions, but it's also had intense technical revolutions. Often musicians are horrified by technical transitions in their way of life. When recorded music first appeared in the world, that music was recorded with the use of machines. Music of that time could be written down with score-sheets of ink on paper, or even punched into paper, on piano rolls for mechanical pianos. But this was the first time that a musician's own musical performance could be turned into a technical artifact, a commodity, and sold on wax cylinders.

This was the start of the recorded music industry. It was a culturally shocking invention rather like AI in some ways. When music was recorded, interactions that had always been human were dehumanized.

Artificial words appeared. Artificial voices. Artificial speech. There had never been any. The first commercial uses for recordings were efforts to get dolls to talk. Artificial children, talking aloud. Also, dead people could talk. You could see them buried, and yet still hear the voices of the dead. It was like the modern shock of talking to an AI chatbot — it can hear speech and speak, it can read and write. It's an artificial intelligence. A wax recording is an artificial voice.

Musicians had the sense that something important had been stolen from them. Because that was true. A musical "band" was a band of people. And an audience was a gathering of people. Those people gathered with a purpose to see the band unite and perform the music. The effort of the band moved the audience, who responded with dancing and applause. Music was a human act of unity. It came from musical instruments and written musical scripts, but it also came from human breath and bodies, and costumes, and with rituals, in specific places, concert halls, bands marching in the streets. That human endeavor was degraded and under threat. Because it was mechanically reduced to some grooves in a cheap piece of wax.

Music didn't die in these technical traumas. If you wanted to hear music, there was vastly more music than ever before. But there was also a genuine loss of humanity, and a genuine artificiality to this technologized music.

...all that drama happened long before we were born, so it's easy to shrug and feel used to it. The fire-born are at home in fire, and we live in that fire. In the modern condition of AI that bonfire is blazing right now. People are anxious about losing their jobs to AI. People are anxious about losing their identities to AI.

...you could say that jazz was infrastructural — that music from the town of New Orleans could be recorded, and exported, and transmitted on the radio. There were new forms of mass media, so it was easier to spread a viral fad, around the world. So, that somehow explains jazz.

...It's pretty clear to me that the generation of AI — and it's been going on for ten years, it's a generation — has a lot of that unspoken Jazz Age anguish. It's a vivid displacement activity for a lost and troubled era.

I'm a novelist, so I notice the peculiar emotional expressions here. I notice things like AI burnout, AI psychosis, unhealthy relations with imaginary boyfriends and girlfriends, AI fakes, stock market bubbles, and the fear of missing out. That stark fear. So much fear. The fear of missing that golden chance. Also, the fear that AI is real this time, and is really happening. The apocalyptic terror that AI will lead to the destruction of the world.

This is not the cyberpunk dystopian dark side of AI. This is the propulsive force of AI. It's the restless and itchy drive that forces you to leave your apartment and rush downtown to the jazz club.

...This is the high summer of AI. The scene is red hot. I've been aware of AI for my entire, extensive lifetime, and it's never been this technically intense and this deeply felt. Rational people, with education and money and power and experience, are cracking up in public. They're losing their heads over it. Billionaires, captains of industry, politicians, military, spies. Worldwide. Old and young, men and women.

It's a craze.

I'm very interested in it. I follow its every little up and down. It is so far out and science fictional that it might have been built just to entertain elderly cyberpunk writers. I do not invest in it. I'm not selling any of it to you. I don't use it much personally. It isn't changing my life — not much as yet. I'm not afraid of it. I don't even think it will last. It's defining an era, an era which is ten years old and counting, but something else will show up. AI is not a fraud, or pretense, or a fake. It's a real and powerful technology and we're never going back to the way things were. I recognize all that, but also, I take consolation in continuity.

...So he's this Belgian guy: Guillaume Du Fay. He's from nowhere, and his father is a priest, so he's a bastard child. So, two major personal problems. However, he's a musical genius. His music teachers just give him their precious, hand-written musical books, as personal gifts. At age 16 he's a full-time musician. Music is the life for him.

He's very involved with the New Music, which is "Ars Nova," literally the new art of music. Ars Nova is like the older Gregorian chant music, but it's got "isorhythmic motets." This is not simple folk music — Mom can't sing it to the baby while she's making pie. Isorhythmic motets have to be composed. A composer has to write them down on paper in musical notation, and study them in order to get them to work.

Guillaume Du Fay can play the organ and he can conduct a choir. But he's the first musician in the world who is a professional composer. People actually pay him to compose new music in a form in which he's the expert and the creative leader. They want to hear his Ars Nova music because it's innovative and it's like no music they ever heard.

He's got imitators — of course. They're not as good as him. They can't get the isorhythmic motets to swing right. They've got distinct rhythms: the melody and the vocals, and the vocals are repeated and they slide in and out of phase with the music. If you don't know what you're doing it sounds awful, but if you're Guillaume Du Fay you can do anything with it.

And everybody knows that. He's the greatest musician in the world, and everybody agrees about that. In Florence they build the Dome of Brunelleschi. This Florentine cathedral is a sacred building and the biggest building in a thousand years. It's a Renaissance building, and still standing there right now in Florence, today. When it was brand new, it was already a famous building.

They're going to cut the ribbon and let the crowds in. Somebody has to write the theme music for this cathedral. Of course it's him. "Guglielmo Du Fay," the most famous musician in Italy, or the world, even. It's a musical commission, but he gets the job done and he's better than anyone else. The music exists and is admired now just like the stone cathedral exists and is admired now. It's rock solid music.

Later he becomes a priest — because his dad was a priest, so that's not gonna slow him down much. As a priest, he becomes the choir master and top musician for the Pope. He's at the Vatican, the center of the world. All roads go there, and he's composing the music. He has the greatest music job that is possible. He's the finest musician of his lifetime and everybody agrees about that for the next six hundred years.

So what happens to Du Fay? Well, the Pope gets in political trouble. There's another pretender Pope, and also the population of Rome has a violent riot and they chase the Pope out of town. So Du Fay has to run for his life. This twist of fate must have really been annoying.

...AI is a fad, but not merely a fad. You can't merely wait for it to blow over, and imagine that things will be as they were. A lot of it will blow over, but also a lot of things will be blown flat by it. Important matters, customs, infrastructures, cherished ways of life, gone with the wind and never replaced.

4iv26

Gemini AI Deep Research is Now INSIDE NotebookLM Alex Tucker at Medium

5iv26

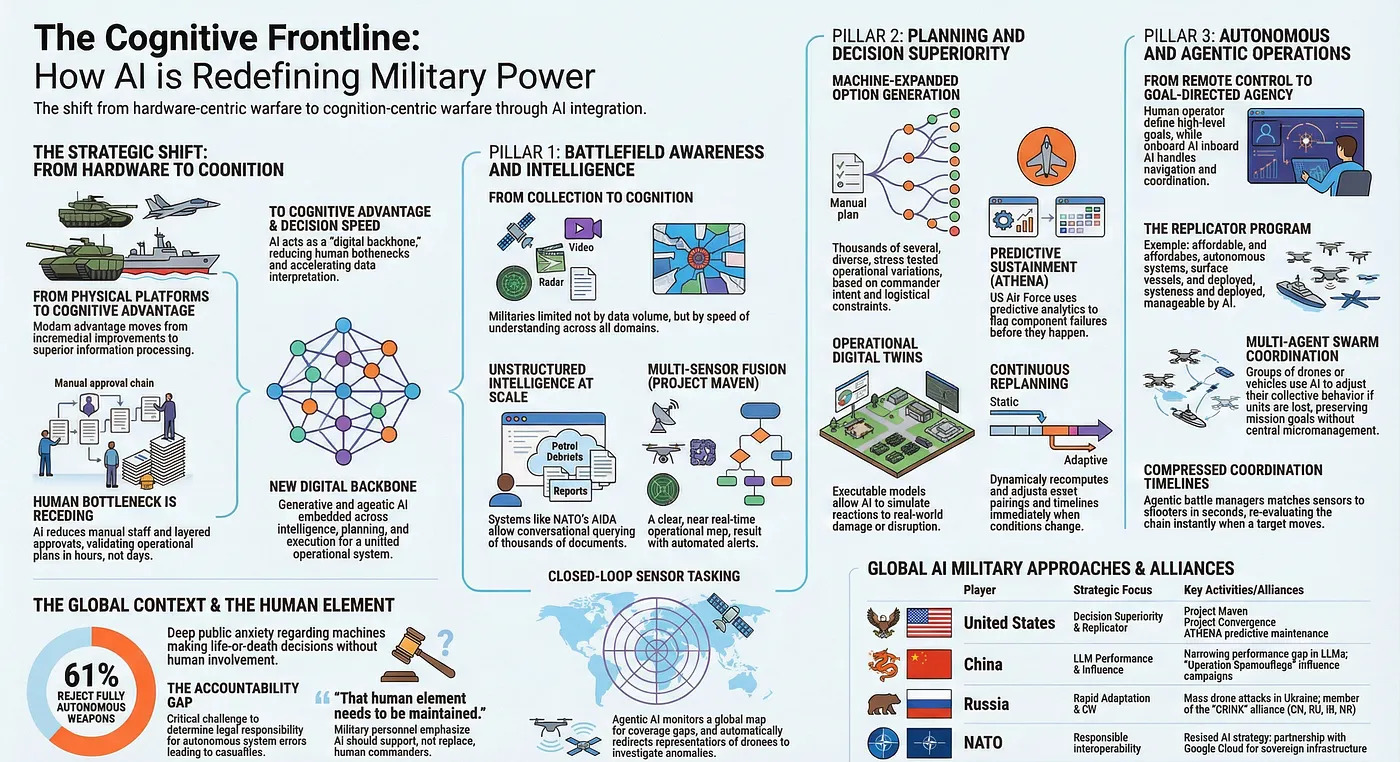

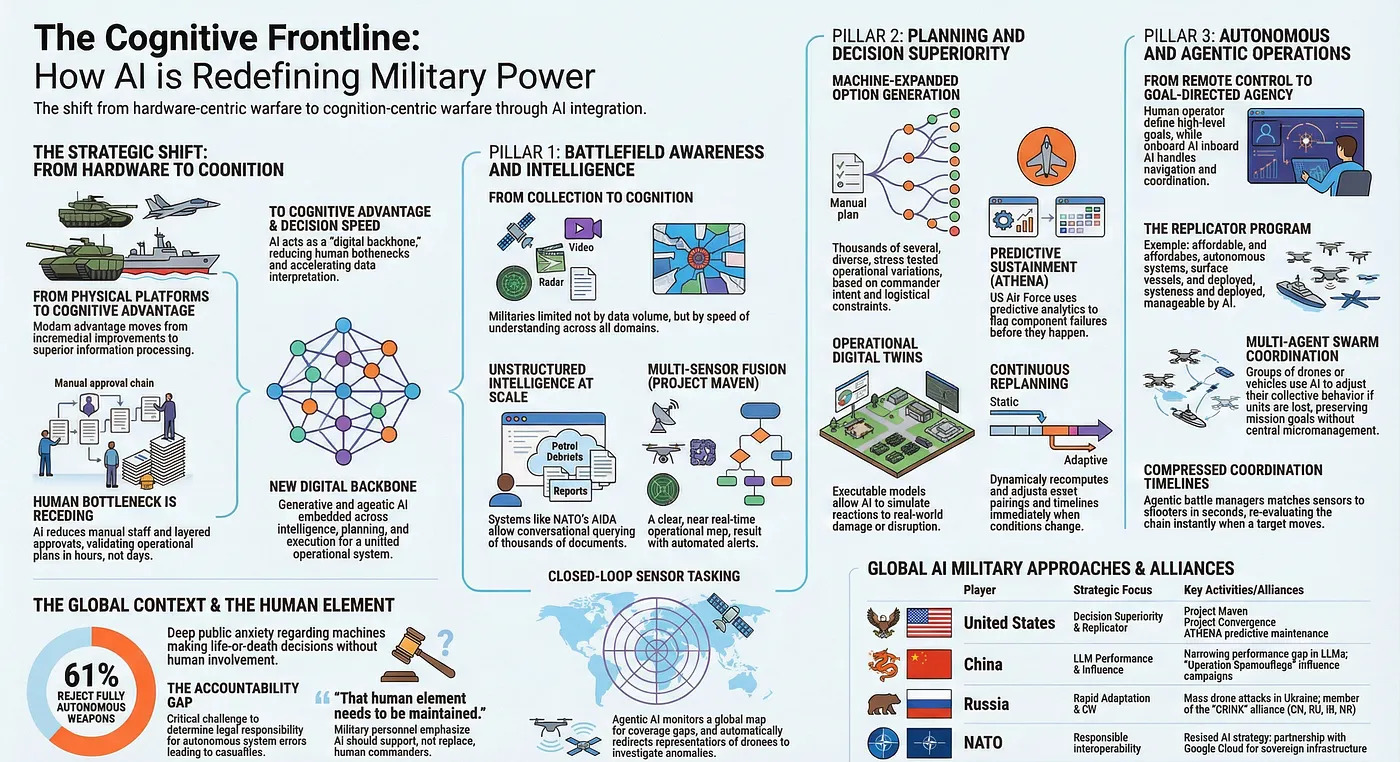

Forget killer robots: 3 ways AI is really revolutionizing modern warfare Laura Verghote at Medium

...AI is reshaping how militaries understand situations, plan operations, and execute missions across the full lifecycle of combat power.

For most of modern history, military advantage came from better physical platforms and incremental hardware improvements such as thicker armor, longer range, and faster engines. Decision making depended on large staffs, manual analysis, and layered approval chains. Building and updating capability often took years or decades, and operational plans could take days to assemble and validate.

That foundation is shifting from platform advantage to cognitive advantage. Warfare is becoming less hardware centric and more cognition centric, driven by information processing, planning speed, and coordinated execution. The human bottleneck in analysis and staff work is being reduced. Modern forces increasingly rely on AI to accelerate how they interpret data, generate options, and coordinate action.

Claude Code: Everything you need to know in 8 minutes or less James Wilkins at Medium

...While it may have been designed as a coding assistant, it's really just an agent capable of managing your files, using skills, and writing and running code (all by itself) to solve whatever problem you throw at it.

Claude Code is one of the more capable AI tools currently available, and you do not need a programming background to get real value from it. This guide covers everything from the basics of how it works through to its most advanced features, so you can decide how far you want to take it.

...It can read files; write, edit and run code; install software, and build things from scratch. You describe the goal in plain English; Claude figures out how to achieve it.

Claude Cowork: The library that contains everything and reveals nothing Mihailo Zoin

In the abbey library of Umberto Eco's The Name of the Rose, every book exists. The collection is vast, catalogued by someone, at some point, under some system. Brother William of Baskerville knows the text he needs is inside those walls. The problem is not absence. The problem is architecture.

The library is a labyrinth. The rooms connect, but not in ways that correspond to how a reader thinks. The books are physically present. They are operationally invisible.

William does not have a knowledge problem. He has a placement problem.

...In Eco's novel, the blind monk Jorge designed the library not to facilitate discovery but to control it. The labyrinth serves the builder's logic, not the seeker's.

...William of Baskerville did not solve the library by reading every book. He solved it by understanding the architecture that determined which books he could reach.

6iv26

Engineering Storefronts for Agentic Commerce Heiko Hotz at O'Reilly

...The emerging standard for solving this is the Universal Commerce Protocol (UCP). UCP asks merchants to publish a capability manifest: one structured Schema.org feed that any compliant agent can discover and query. This migration requires a fundamental overhaul of infrastructure. Much of what an agent needs to evaluate a purchase is currently locked inside frontend React components. Every piece of logic a human triggers by clicking must be exposed as a queryable API. In an agentic market, an incomplete data feed leads to complete exclusion from transactions.

...Agents are now browsing your store alongside human buyers. Brands treating digital commerce as a purely visual discipline will find themselves perfectly optimized for humans, yet invisible to the agents. Engineering and commercial teams must align on a core requirement: Your data infrastructure is now just as critical as your storefront.

AI has limits, even if many AI people can't see them Henry Farrell

Benjamin Recht's The Irrational Decision: How We Gave Computers the Power to Choose for Us

...Towards the end of his new book, The Irrational Decision, Ben Recht explains what he has set out to do.

"Most books on technology either take the side that all technology is bad, or all technology is good. This isn't one of those books. Such books focus too much on harms and not enough on limits. Limits are more empowering. Throughout the book, I've maintained that mathematical rationality is limited in what kinds of problems it is best placed to solve but has sweet spots that have yielded remarkable technological advances."

It may be that more books on technology escape the good-bad dichotomy than Ben allows. Even so, I haven't read another book that is nearly as useful in explaining why and where the broad family of approaches that we (perhaps unfortunately) call AI work, and why and where they don't. Ben (who is a mate) combines a deep understanding of the technologies with a grasp of the history and ability to write clearly and well about complicated things. I learned a lot from this book. Very likely, you will too.

...Neither AI Rationalism or AI-Con Thought is all that helpful in explaining the technologies we confront right now. The former tends to launch into fantasy, repeatedly demonstrating how starting from ridiculous premises allows you to reason your way to ridiculous results. The latter tends to curdle into denialism, claiming ever more loudly that disliked technologies are useless even as they find ever more uses. We ought to be much more worried about the claims of the triumphalists than the denialists, since they are far more influential. But to successfully deflate their claims, we need a more grounded perspective on what AI and related technologies are capable of than can be provided by the denialists.

The Irrational Decision provides strong reasons for skepticism about the grander aspirations of the rationalist project, while explaining why machine learning has remarkable uses in its appropriate domain. Those who are embroiled most closely with the rationalist project have a hard time understanding its limits because those limits shape their own world view. The one weird trick of rationalism is to recompose complex problems in terms that can readily be rationalized. When that is good, it is very, very good, but when it is bad, it is horrid. To understand this, it's first necessary to understand where rationalism comes from.

...The story he tells is necessarily messy, but some important broad themes emerge, most importantly around the development of optimization theory. Linear programming makes it possible to find optimal ways to allocate resources within a limited budget so long as the constraints are linear (when they are not, all computational hell can break loose). Optimal control theory allows a control system to adjust optimally to its environments (again, under restrictive assumptions about the constraints). Game theory can postulate - and often even discover - optimal strategies to play against opponents in strategic situations. These toolkits overlap with others. A family of techniques, ranging from simulated annealing to the ancestral forms of the gradient descent/backpropagation that "deep learning" relies on, provides ways to discover superior local optima in more complex situations. Randomized clinical trials (RCTs) provided possible ways to discover whether a given intervention (a drug; a policy measure) worked or not.

All of these approaches suggest the superiority of technical forms of analysis over human judgments. RCTs apply protocols and statistical analysis to try to discover causal relationships (according to the standard story), or justify interventions (according to Ben's). Other approaches involve the discovery of optimal solutions, given convenient mathematical assumptions and simplifications. Others still involve the discovery of local optima (that is: solutions that are better than others that are readily visible in their neighborhood), which may be better than those that ordinary humans could reach.

...Understanding mathematical rationalism helps us understand the strengths and limitations of AI. It isn't just a form of rationalism, but the combined application of a variety of long established rationalist techniques - neural nets (which go back to the 1950s), statistical learning and backpropagation, made possible by more powerful computers and enormous amounts of readily available data. Claude Shannon's methodology for modeling language, which is the intellectual basis of "large language models" is "an instance of statistical pattern recognition" or machine learning. And machine learning itself is no more and no less than a powerful statistical tool. I found this passage maybe the most clarifying explanation of what it does that I've ever read.

To frame the prototypical machine learning problem, I like to think about a hypothetical spreadsheet. Each row of the spreadsheet corresponds to some unit or example. But I don't care what the units mean. I just know that I have a bunch of columns filled in with data. And I'm told one of the columns is special. I am about to get a load of new rows in the spreadsheet, but someone downstairs forgot to fill in the special column. Management has tasked me with writing a formula to fill in what should be there. For whatever reason, I don't get to see these new rows and have to build the formula from the spreadsheet I have. The formula can use all sorts of spreadsheet operations: It can assign weights to different columns and add up the scores, it can use logical formulas based on whether certain columns exceed particular values, it can divide and multiply. ... I'll do an experiment. I'll take the last row of my spreadsheet and pretend I don't have the special column. I'll write as many formulas as I can. ... But why single out that last row? I can do something similar for every row! I'll invent a set of plausible functions. I'll evaluate how well they predict on the spreadsheet I have. I'll choose the function that maximizes the accuracy. This is more or less the art of machine learning.

Stochastic Lobsters, Token Tsunamis, & the Spinning-Up of Isaac576Bot Brad DeLong

...appearing to work because much more of human language than we like to think is formulaic parrotage
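
(An aside on the spreadsheet framing quoted from Recht above. What follows is a minimal sketch, assuming a made-up five-row spreadsheet and three made-up candidate formulas; none of the names or numbers come from the book. It scores each formula on the rows already in hand and keeps the most accurate one.)

```python
# Toy version of the "fill in the special column" exercise.
# The rows, column names, and candidate formulas are all hypothetical.

rows = [
    {"a": 1.0, "b": 5.0, "special": 0},
    {"a": 2.0, "b": 1.0, "special": 0},
    {"a": 6.0, "b": 2.0, "special": 1},
    {"a": 7.0, "b": 9.0, "special": 1},
    {"a": 3.0, "b": 8.0, "special": 0},
]

# "A set of plausible functions": thresholds on single columns,
# and one weighted sum of columns compared against a cutoff.
candidates = {
    "a_over_4": lambda r: int(r["a"] > 4),
    "b_over_4": lambda r: int(r["b"] > 4),
    "weighted_sum": lambda r: int(0.5 * r["a"] + 0.5 * r["b"] > 4.5),
}


def accuracy(formula, data):
    """Fraction of rows where the formula reproduces the special column."""
    hits = sum(1 for r in data if formula(r) == r["special"])
    return hits / len(data)


# Evaluate every candidate on the spreadsheet we have, then pick the best.
scores = {name: accuracy(f, rows) for name, f in candidates.items()}
best_name = max(scores, key=scores.get)
print(scores)
print("chosen formula:", best_name)
```

Doing well on the rows in hand does not by itself guarantee anything about the unseen rows, which is why the quoted passage starts by hiding a row and pretending not to know its special column.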

7iv26

AI is creating the first generation of cognitively outsourced humans Enrique Dans at Medium.

For years, we have been outsourcing pieces of cognition so gradually that the shift barely registered. We outsourced memory to search engines after the well-known "Google effect" showed that when people expect information to remain accessible online, they are less likely to remember the information itself and more likely to remember where to find it. We outsourced navigation to GPS, even as research began to show that heavy reliance on it can weaken spatial memory when we have to find our own way. And we outsourced more and more of our social coordination to platforms that decide what we see, when we respond, and how we stay in sync with one another.

Now we are beginning to outsource something far more consequential: not memory, not route-finding, not scheduling, but thought itself. Or, more precisely, the labor of forming a judgment before expressing one.

That is the real cultural shift hidden behind the current enthusiasm around generative AI. The technology is often presented as a productivity layer, a creativity booster, or a universal assistant. And yes, in many cases it is all of those things. But it also creates a dangerous temptation: to confuse frictionless output with actual understanding, and fluent answers with earned judgment.

...Psychologists call it cognitive offloading: shifting mental work onto an external aid. A shopping list is cognitive offloading. A calculator is cognitive offloading. So is a calendar, a notebook, or a reminder app. In that sense, there is nothing inherently new or sinister here. Human beings have always built tools that extend the mind.

...When we outsource storage, we preserve effort. When we outsource navigation, we reduce uncertainty. But when we outsource judgment, we risk weakening the very faculty that allows us to decide whether the machine is useful, misleading, biased, shallow, manipulative, or simply wrong.

...Here is the paradox that most people still miss: the individuals who will benefit most from AI will not be the ones who use it for everything.

They will be the ones who know when not to use it.

That is not a romantic defense of artisanal thinking. It is a practical argument about leverage. People with strong judgment, clear domain knowledge, and disciplined skepticism can use AI to move faster without surrendering authorship. They can interrogate outputs, test assumptions, compare alternatives, and notice when the machine is glossing over ambiguity or inventing certainty. People without those habits are more likely to accept the first plausible answer and move on.

Radar Trends to Watch O'Reilly

AI has moved from a capability added to existing tools to an infrastructure layer present at every level of the computing stack. Models are now embedded in IDEs and tools for code review; tools that don't embed AI directly are being reshaped to accommodate it. Agents are becoming managed infrastructure.

At the same time, two forces are reshaping the economics of AI. The cost of capable AI is falling. Laptop-class models now match last year's cloud frontiers, and the break-even point against cloud API costs is measured in weeks. The competitive map has also fractured. What was a contest between a few Western labs is now a broad ecosystem of open source models, Chinese competitors, local deployments, and a growing set of forks and distributions. (Just look at the news that Cursor is fronting Kimi K2.5.) No single vendor or architecture is dominant, and that mix will drive both innovation and instability.

...The technical transitions are easy to talk about. The human transitions are slower and harder to see. They include workforce restructuring, cognitive overload, and the erosion of collaborative work patterns. The job market data is beginning to clarify: Product management is up, AI roles are hot, and software engineering demand is recovering. The picture is more nuanced than either the optimists or the pessimists predicted.

8iv26

Anthropic's New Model Is So Scarily Powerful It Won't Be Released, Anthropic Says gizmodo

The bottleneck shifts to distribution Gordon Brander, Squishy (via Stephen Downes)

AI Is Really Weird Ed Zitron

...despite everybody talking about the hundreds of gigawatts of data centers being built "to power AI," only 5GW are actually "under construction," with "under construction" meaning anything from "we've got some scaffolding up" to "we're about to hand over the keys to the customer."...Probably the weirdest thing about this entire era is how nobody wants to talk about the fact that AI isn't actually doing very much, and that AI agents are just chatbots plugged into an API.

...2025 was meant to be the year of AI agents, but turned out to be the year of talking about AI agents. Agents were/are meant to be autonomous pieces of software that go off and do distinct tasks.

In reality, it's kind of hard to say what those tasks are. "AI agent" now refers to literally anything anybody wants it to, but ultimately means "chatbot that has access to some systems."

...The word "agent" is meant to make you think of powerful autonomous systems that carry out complex and minute tasks, when in reality it's ...a chatbot. It's always a fucking chatbot. It might be a chatbot with API access or a chatbot that generates a plan that another chatbot looks at and says something about, but it's still chatbots talking to chatbots.

When you strip away the puffery, nobody seems to actually talk about what AI does.

...AI agents do not, as sold, actually exist. Every "AI agent" you read about is a chatbot talking to another chatbot connected to an API and a system of record, and the reason that you haven't heard about their incredible achievements is because AI agents are, for the most part, fundamentally broken.

...LLMs are good at writing a lot of code, not good code, and the more people you allow to use them, the more code you're going to generate, which means the more time you're either going to need to review that code, or the more vulnerabilities you're going to create as a result. Worse still, hyperscalers like Meta and Amazon are allowing non-technical people to ship code themselves, which is creating a crisis throughout the tech industry.

Worse still, LLMs allow shitty software engineers that would otherwise be isolated by their incompetence to feign enough intelligence to get by, leading to them actively lowering the quality of code being shipped.

...Encouraging workers to burn as many tokens as possible is incredibly irresponsible and antithetical to good business or software engineering. Writing great software is, in many cases, an exercise in efficiency and nuance, building something that runs well, is accessible and readable by future engineers working on it, and ideally uses as few resources as it can.

TokenMaxxing runs contrary to basically all good business and software practices, encouraging waste for the sake of waste, and resulting in little measurable productivity benefits

...TokenMaxxing is not real demand, but an economic form of AI psychosis. There is no rational reason to tell somebody to deliberately burn more resources without a defined output or outcome other than increasing how much of the resource is being used. I have confirmed with a source at that there is no actual metric or tracking of any return on investment involved in token burn at Meta, meaning that TokenMaxxing's only purpose is to burn more tokens to go higher on a leaderboard, ...TokenMaxxing literally encourages you to do the opposite — to use whatever you want in whatever way you want to spend as much money as possible to do whatever you want because the only thing that matters is burning more tokens.

...Though the term is pretty new, the practice of encouraging your engineers to use AI as much as humanly possible is an industry-wide phenomena, especially across hyperscalers like Amazon, Microsoft and Google, all of whom until recently directly have pushed their workers to use models with few restraints. Shopify and other large companies are encouraging their workers to reflexively rely on AI, with performance reviews that include stats around your token burn and other nebulous "AI metrics" that don't seem to connect to actual productivity.

...one of the weirdest parts of the whole AI bubble: the possibility of something existing is enough for the media to cover it as if it exists, and a product saying that it will do something is enough for the media to believe it does it.

AI-Infused Development Needs More Than Prompts Markus Eisele at O'Reilly

...Most of the attention goes to code generation. Can the model write a method, scaffold an API, refactor a service, or generate tests? Those things matter, and they are often useful. But they are not the hard part of enterprise software delivery. In real organizations, teams rarely fail because nobody could produce code quickly enough. They fail because intent is unclear, architectural boundaries are weak, local decisions drift away from platform standards, and verification happens too late.

That becomes even more obvious once AI enters the workflow. AI does not just accelerate implementation. It accelerates whatever conditions already exist around the work. If the team has clear constraints, good context, and strong verification, AI can be a powerful multiplier. If the team has ambiguity, tacit knowledge, and undocumented decisions, AI amplifies those too.

That is why the next phase of AI-infused development will not be defined by prompt cleverness. It will be defined by how well teams can make intent explicit and how effectively they can keep control close to the work.

9iv26

What Happens When AI Gets Too Good at One Thing Alberto Romero

...Given a piece of software—a browser, an operating system, whatever—Mythos, which by the way destroyed every coding and agents benchmark out there, can find old, immediately exploitable vulnerabilities that not even the developers knew existed, with minimal human steering. And then it can go and figure out how to exploit them. From this moment, no software system in the world is safe to the extent that this technology is possible (in practical terms, only 3-5 players will be able to build this kind of system in the next few months, and none outside the US).

10iv26

When AI stops being a tool and becomes a job requirement Enrique Dans at Medium

We are in the midst of a transformation that is no longer just about productivity, automation or "new tools". As an article in the Wall Street Journal explains, AI in the workplace is no longer simply an additional resource: in technology companies, AI skills are an explicit requirement, incorporated into performance evaluations, selection processes and daily performance expectations.

This new reality changes the nature of the debate. When a tool is now mandatory, it ceases to be just technology and becomes a form of work discipline. The question is no longer whether ChatGPT, Copilot or the agent on duty help you work better, but what happens when the company decides that your professional value depends on your willingness to integrate them into each task. The Wall Street Journal article describes this quite clearly by documenting how big tech companies and service companies are beginning to measure the use of AI, to reward it and, in some cases, to penalize employees who don't use it. This is not corporate fashion, but rather the construction of a new standard of work normality.

Naturally, this normality is sold under the most predictable packaging in the world: "adaptation", "efficiency", "future of work", "upskilling". Google, for example, has launched a whole narrative about how AI drives growth, training and competitiveness, while Salesforce says there's been a huge increase in the use of AI among knowledge workers, along with a growing expectation among employers that teams can redesign templates and functions around agents and automation. Companies that have invested billions in AI need to demonstrate that its internal adoption is real, irreversible and exemplary.

The problem is that, as always, the costs of this supposed modernization are not distributed evenly. The OECD has been warning for some time that algorithmic management in the workplace not only organizes tasks; it also monitors, classifies, recommends, scores and conditions professional autonomy.

LeWorldModel: 15 Million Parameters to Map the World evoailabs at Medium

...The AI research landscape just shifted. On March 13, 2026, a team including Yann LeCun and researchers from Mila and AMI Labs released a paper titled "LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels".

For years, LeCun has argued that Large Language Models (LLMs) are a "dead end" for true AGI because they lack a "world model" - an internal understanding of physics and causality. LeWorldModel (LeWM) is the implementation of that philosophy. Here is an analysis of why this paper is being hailed as the "Physical AI" breakthrough of 2026.

...The "secret sauce" of LeWorldModel is a regularization technique called SIGReg (Sketched-Isotropic-Gaussian Regularizer).

...LeWorldModel proves that physical intelligence does not require massive scale. By using 15M parameters and a single GPU, the researchers achieved what was previously thought to require billions of parameters and thousands of H100s.

...For the Industry: It signals the end of the "Generative AI" monopoly. We are moving from models that generate (predicting the next token) to models that understand (predicting the next state of the world).

The Verdict: If 2023 was the year of the Chatbot, 2026 is becoming the year of the World Model. LeWorldModel is the blueprint for how AI will finally step out of the computer screen and into the physical world.

Why ontology, identity, and memory are reshaping enterprise AI in 2026 Akash Goyal at Medium

...The problem is that we no longer agree on what things are, which things are the same over time, or why decisions were made in the first place. That is why ontology, memory, and identity are suddenly back in the spotlight. Not as theory. Not as fashion. But as survival infrastructure.

2026 is shaping up to be the year this becomes unavoidable.

Executives are asking for ontology strategies. Vendors are shipping semantic platforms. Product decks are full of new phrases like context graphs, decision fabrics, and meaning layers.

...Ontology is not about naming concepts. It is about deciding what exists inside a system and what actions are allowed to happen because of that existence... Many organizations believe they have ontology because they have schemas or taxonomies. Those help, but they do not unify reality. You cannot connect an enterprise by agreeing on labels alone. Meaning must be anchored to real entities: customers, assets, contracts, events, and decisions that actually occur.

When ontology floats above reality, systems look clean on paper and fail in production.

AI does not tolerate that gap....In 2025, the focus was reasoning.

In 2026, the fight moves to memory.

AI systems are now expected to remember context, learn from experience, and improve over time. That sounds helpful until memory starts accumulating without structure.

Ontology tells you what exists.

It does not tell you why reality looks the way it does today.

That explanation lives in memory....Memory is not storage. It is narrative.

...Without clean semantics, stable identity, and governed memory, custom models simply become faster guessing machines trained on inconsistent reality. They may perform well locally while increasing systemic risk elsewhere.

Most organizations do not need smarter models.

They need cleaner meaning....A quiet architectural shift is already underway.

Neural models infer. Ontologies constrain. Memory stabilizes behavior over time.

Ontology is no longer just about search or retrieval. It defines what actions are allowed, what workflows can run, and what authority exists inside the system.

This is why ontology ownership is becoming strategic. Whoever controls meaning controls behavior.

Extracting data is easy.

Extracting meaning is not.

Worried About A.I. Taking Your Job? That's Not Very 'Agentic' of You. Nitsuh Abebe at NYTimes

There are various reports and they all seem to agree: The tech world is currently awash in the concept of agency. It is, more specifically, extremely into the word "agentic," which peppers the language of the tech-associated, the tech-adjacent, the tech-adjacent-adjacent.

That's "agentic" as in, you know, having agency - possessing the capacity “to influence and control outcomes through assertive individual action,” as the Oxford English Dictionary has it. The word holds a lot of meaning in computing, but Silicon Valley aspirants seem just as eager to apply it to themselves. They talk about being agentic people; sometimes they dress up the idea in a little rhetorical suit and talk about the Highly Agentic Individual. They are describing the kind of person who simply acts, assertively, to shape the world, rather than seeking approval or meekly following the herd. Candidates for tech jobs get asked if they're agentic (good) or mimetic (yuck). On X, people debate whether the platform's owner, Elon Musk, is in fact "the most agentic person alive." One poster laments the way a cold can ruin your workday: "You won't make any deals, you won't be an agentic person. You're milquetoast." Another just needs an adequately agentic aide to help schedule medical appointments.

...Today's futurist spin is not so vastly different — except that it adds, predictably, the self-regarding will-to-power fantasies that seem endemic to tech culture. The language often echoes familiar dreams of becoming a rule-shattering visionary, a rugged-individualist lion instead of a placid, blue-pilled, normie sheep. There is an obvious and proximate reason you would find agency coursing through tech right now: The industry is currently adding agency to A.I. The field is graduating from generative models and chatbots to A.I. "agents" — models meant to act on their own, pinging through the digital world making plans, purchases, decisions. People buzz about agentic coding, agentic commerce, agentic dating, a whole agentic internet; anything you do on a computer could be done by a computer.

... There's a common prediction among those hoping to outstrip their ovine, mimetic peers: The A.I. models, they say, will eventually supply all of the effort, training and expertise that have historically stood between humans and our ability to simply make things happen. Once all those annoyances are pushed out of the way, the only thing separating the world's winners from its losers will be the sheer motivation to act, the raw ambition to do a thing — pure, bold agency. You run across this notion all sorts of places, sometimes as a frantically held belief and sometimes as a sales pitch. A sufficiently agentic layperson, someone argues on X, could sit down with an A.I. and produce the same work as a Ph.D student. "In the age of A.I., becoming a super agentic individual is a superpower"; "Being a highly agentic individual is going to be so important with the rise of A.G.I."; "In the A.I. era, become an 'agentic individual' to thrive! Discover how work evolves with A.I. agents. Don't get left behind!" All that will matter is your own superior drive and will; every other aspect of achievement can be handled, as if by magic, in the guts of some colossal data center.

Why Andrej Karpathy's LLM Wiki is the Future of Personal Knowledge evoailabs at Medium

...The LLM Wiki pattern marks a fundamental maturity in how we use AI. We are moving away from treating LLMs purely as search engines or text generators, and finally starting to use them as tireless librarians and system maintainers. By shifting the workload of documentation to the AI, we finally unlock the dream of a compounding "Second Brain" that actually takes care of itself.

Tiny AIs, Finally Ready? Toward Affordable AIs Ignacio de Gregorio 5iv26 at Medium

...But what is a computer? It's just a machine that executes mathematical operations. To make these operations, it uses compute processors. However, these need data. And data is stored in memory.

So, effectively, a computer is just a set of memory chips that send and store instructions and data, which are fetched, decoded (the instruction is interpreted), and executed by the computer's processor/s. The results are then stored back into memory, and the cycle repeats.

...Historically, the compute worker was the bottleneck. That is, we had plenty of data being provided to the compute worker, but it wasn't fast enough.

This, coupled with Moore's Law still in effect (compute density doubling every 2 years), put all the scaling pressure on compute, which saw remarkable expansion over several decades.

Importantly, as compute power was the bottleneck, this allowed the memory chips to improve at a much slower rate.

And it wasn't only a matter of technology, but also of supply chains. While the manufacturing of compute chips led to the surge of a huge number of new processor chip factories, our manufacturing capacity of memory chips paled in comparison.

...But then came Generative AI, and suddenly, what we thought was true was no more.

...While our modern AI hardware is extremely powerful on the compute side, its memory bandwidth (the ability to move data in and out of memory quickly) has lagged considerably.

This has led to the grim picture that is AI (especially inference) today, one where memory is holding everyone back.

...The issue is that, put mildly, we need a lot of bytes in AI. Models today are comfortably above the 200-billion-parameter range, with frontier models comfortably above the several-trillion-parameter range.

Yes, that means what you think it means: a model can have 2,000,000,000,000 parameters. For reference, it's estimated that the world has 3 trillion trees, so there are models at your disposal today with more parameters than there are trees on planet Earth.
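
(A back-of-the-envelope sketch of why parameter counts turn into memory pressure. The 2-trillion figure is from the text; the bytes-per-parameter values and the 3 TB/s bandwidth figure are illustrative assumptions, not measurements of any real model or chip.)

```python
# Rough arithmetic only; the precision and bandwidth values are assumptions.

PARAMS = 2_000_000_000_000  # 2 trillion parameters, as in the text


def weight_bytes(params: int, bytes_per_param: int) -> int:
    """Bytes needed just to hold the weights."""
    return params * bytes_per_param


def seconds_per_full_read(total_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to stream every weight out of memory once."""
    return total_bytes / bandwidth_bytes_per_s


ASSUMED_BANDWIDTH = 3e12  # hypothetical 3 TB/s of memory bandwidth

for label, bytes_per_param in [("16-bit", 2), ("8-bit", 1)]:
    size = weight_bytes(PARAMS, bytes_per_param)
    t = seconds_per_full_read(size, ASSUMED_BANDWIDTH)
    print(f"{label} weights: {size / 1e12:.0f} TB, {t:.2f} s per full read")
```

If producing a token means reading all of the weights, the time for one full read caps how fast tokens can come out, however fast the arithmetic is. Architectures that touch only part of the weights per token would read less, so treat this as a ceiling on the cost under these assumptions and not a prediction.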

...The reason AI is very expensive is ironically not because it's incredibly expensive to run, but because it's incredibly expensive to build.

In an AI data center, roughly 90% of total cost of ownership is capital costs (purchasing hardware, securing power, and labor and construction).

It's not like AI cannot be served cheaply; it's that the companies building these data centers are under enormous investor and debt pressure to guarantee they get a return on an incredibly expensive project.

Which is to say, data center-based AI will never be cheap as long as capital costs are so high

AI Is Eating Its Own Tail And Biting The Hand That Feeds It Will Lockett at Medium

The AI information economy Is screwed.

I have called the AI boom a death cult before because it is in so many different ways. From its total lack of financial sustainability to its horrific environmental impact to the veritable psychopathic psychosis of the tech bros pushing this technology onto us, every decision made is an almost fetishistic attempt to dominate us and prove to Daddy Shareholder that they are still the golden child. But the elephant in the room is the other way AI is spiralling towards destruction. You see, AI is choking the data economy to death, which is the foundation that the generative AI industry depends on, in two distinct ways.

(1)...Now, here's the thing: these publishers need traffic to generate the income that sustains them. Less traffic means less money. Less money means these sites produce less content. That means that these AIs will have less valuable data to be trained on.

Can you see the problem here? AI is biting the hand that feeds it.

Apparently, using AI to replace human connections crushes the human output AI depends upon. Who would have thought?

(2)...weird things begin to happen when you train an AI on AI-generated data.

You see, AI-generated content contains tiny, almost indistinguishable trends that human-generated content doesn't have. That is why we feel we can spot when AI has written something. If you then start feeding this data back into the AI model, it will place more and more weight on these non-human trends. At first, this looks like the AI becoming less capable, but if you continue feeding the AI its own output, it will eventually place more weight on these generated trends rather than the human ones, causing the model to collapse and produce gibberish. We call this "model collapse," and it is a surprisingly well-studied phenomenon.
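
(A toy analogy for the feedback loop described above, not a claim about how any language model is actually trained. The "model" here is just a bell curve fitted to a small sample; each generation is fitted only to samples drawn from the previous generation's fit. The sample size, generation count, and seed are arbitrary assumptions.)

```python
import random
import statistics


def simulate(generations: int, sample_size: int, seed: int = 0) -> list[float]:
    """Refit a Gaussian to its own samples, generation after generation.

    Returns the fitted spread (standard deviation) at each generation,
    starting with the original "human" distribution's spread of 1.0.
    """
    rng = random.Random(seed)
    mean, std = 0.0, 1.0
    spreads = [std]
    for _ in range(generations):
        # Generate data from the current model only (no fresh outside data).
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # Fit the next model to that generated data.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        spreads.append(std)
    return spreads


spreads = simulate(generations=300, sample_size=10)
print(f"starting spread: {spreads[0]:.3f}")
print(f"spread after {len(spreads) - 1} generations: {spreads[-1]:.3g}")
```

In this toy setup the fitted spread tends to shrink as generations pile up, because each small sample under-represents the tails of the one before it. That loss of variety is the loose parallel to the excerpt's point; the real phenomenon in large models is more complicated than this sketch.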

...According to Axios, by the middle of 2025, over half of the content being posted online was AI-generated.

...Put simply, AI companies are using considerable amounts of web-scraped data to train their AI. But more than half of the content posted online is AI-generated, and AI detection tools fail to consistently filter out AI content and incorrectly filter out human-made content. This means that today's AIs are being trained on their own output, or even the output of their predecessor models (which also causes model collapse). This isn't a hypothetical problem; researchers have found a very real, tangible risk that current AI models are headed toward model collapse.

...AI companies have polluted the web with a tsunami of AI-generated content. Naturally, the very data these AI models depend on is being contaminated beyond recognition, which contaminates the AI themselves and sets off a vicious cycle. Not only that, but the sheer volume of AI-generated slop online is drowning out interesting, diverse and valuable human voices, reducing the internet to a bland monoculture. Of course this is horrific news for us humans, but it ain't good for these AIs either, as it means there is most likely less "high-quality" human data being posted online than before.

It's almost like it was a bad idea to unleash an unregulated plagiarism machine that is, at best, a hollow and deeply flawed attempt to mimic humanity onto the world

In layman's terms, the AI information economy is detrimental to everyone, including AI companies. It is crushing and robbing blind the very people producing the data it depends on and is flooding the internet with so much slop it is at risk of destabilising itself. This is a totally unsustainable situation. Can it be solved? Yes, regulation, copyright laws and copyright reform could all help. But these solutions involve taking power away from Big Tech, which is becoming harder by the day. It seems we are all trapped in yet another downward spiral. The question is, will we take the steps to escape before it is too late?

AI Will Be Met With Violence, and Nothing Good Will Come of It Alberto Romero

The first thing you learn about a loom is that it's easy to break. The shuttle runs along a track that warps with humidity. The heddles hang from cords that fray. The reed is a row of thin metal strips, bent by hand, that bend back just as easily. The warp beam cracks if you over-tighten it. The treadles loosen at the joints. The breast beam, the cloth roller, the ratchet and pawl, the lease sticks, the castle; the whole contraption is wood and string held together by tension. It's a piece of ingenuity and craftsmanship, but one as delicate as the clothes it manifests out of wild plant fibers. It is, also, the foundational tool of an entire industry, textiles, that has kept its relevance to our days of heavy machinery, factories, energy facilities, and datacenters.

It is not nearly as easy to break a datacenter.

It is made of concrete and steel and copper and it's on the bigger side. It has interchangeable servers, and biometric locks and tall electrified fences and heavily armed guards and redundancy upon redundancy: every component duplicated so that no single failure brings the whole thing down. There is no treadle to loosen or reed to bend back.

But say you managed to bypass the guards, jump the fences, open the locks, and locate all the servers. Then you'd face the algorithm. The datacenter was never your goal; the algorithm lurking inside is. It doesn't run on that rack, or any rack for that matter. It is a digital pattern distributed across millions of chips, mirrored across continents; it could be reconstituted elsewhere, and it's trained to addict you at a glance, like a modern Medusa.

...The algorithm was also not your goal; the vibrant, ethereal, latent superintelligence lurking inside is. Well, there's nothing you can do here: It always "gets out of the box" and, suddenly, you are inside the box, like a chimp being played by a human with a banana.

Mythos, the AI too powerful to be released? Ignacio de Gregorio at Medium

... This model could allegedly break the Internet and basically every piece of software it's exposed to.

So, is the world as we know it about to change, or is this the ultimate marketing stunt?

...In Anthropic's own words, Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser, and it presents the model as one that can surpass all but the most skilled humans at finding and exploiting software flaws.

23 Questions Every Heavy AI User Should Ask Alberto Romero

...I've been using AI tools every day for over five years now. I research with AI, brainstorm with it, edit with it, and build personal software tools with it. I'm not yet in the multi-agent stage, but it will eventually happen. My current project is akin to Karpathy's wiki/knowledge base, but for the entire archive of writing, reading, and interesting stuff that I've been capturing and classifying for years, rather than research.

...Yesterday—because I'm too methodical or hedge too much—I sat down to write something without relying on AI assistance from beginning to end—research, editing, grammar, etc.—just to see how it felt, and realized a tinge of vertigo. Not having an AI-enabled “safety net” made me feel weirdly vulnerable (in case you don't know, English is not my first language, and so I make typos, confuse words, and misuse expressions, etc., at a higher rate than normal).

My work was slower and less comfortable, and I kept reaching for a Claude tab. Imagine you had to work for a day without the internet, it'd be supremely restricting, right? But AI is so new! How is this happening to me already?

My answer is that, for most heavy users using AI for serious work, this shift is going to happen for AI much faster than it did for the internet or the phone. These tools reward speed, so here we are, with a superpower in our hands, doing more than ever, faster than ever, always monitoring the next release or announcement.

...it's not the people who use AI the most who will reach further, but those who start to ask the right questions about the best practices. Those who dedicate 5% of their time to reflect.

This is, it turns out, an old problem with an old solution: the checklist.

...a prototype, a personal checklist I've done for myself, to keep me on track. The broad idea is to make the user reflect on the use of AI and improve it from the very act of asking these questions (also from the answer, of course). These questions will evolve, change, and I will add new ones, but it's a starting point that I think can be useful for you as well.

Steal it and run it once a month. Even a quick skim is better than nothing!

Eventually, you will want to iterate on these questions as you see fit. This is not intended to be a rigid, fixed, one-fits-all checklist, but the exact opposite: ideally, you will have a checklist so tailored for your work and use cases that, by the time it is truly useful to you, none of my questions will remain.

AI Checklist (pdf)

14iv26

Mark Zuckerberg is Building a Photorealistic AI Avatar of Himself to 'Engage' With Employees

Whatever Happens to Music Will Happen to AI via Stephen Downes

15iv26

What the Studies Say About How AI Affects Your Brain: A (Very Big) Compilation Alberto Romero

Between 2023 and 2026—that is, between ChatGPT changed the world forever and today—many studies from institutions including MIT, Wharton, Harvard, Stanford, Microsoft, OpenAI, Oxford, Google DeepMind, and Chinese universities have investigated what AI chatbots do to human cognition, learning, and psychology.

These studies include brain scans, randomized controlled trials (RCTs) with thousands of participants, longitudinal surveys, meta-analyses, and field experiments in real classrooms and workplaces (both preprints and peer-reviewed).

But, to the best of my knowledge, no one has compiled them in one easily-readable and easily-accessible place. This is it.

...Here's the full picture as we have it so far.

Study by study, I've gathered 30+ in total that, together, reveal what science actually knows about what happens to your brain, your thinking, your learning, and your emotional life when you use AI chatbots.

And crucially, what it doesn't know yet.

The global conclusion that emerges from this compilation is a paradox that will define policy, product design, individual behavior, and how we collectively relate to this new, impressive, and scary technology

- YOUR BRAIN ACTIVITY DROPS

A small but growing number of studies have put people inside brain scanners or strapped EEG sensors to their heads while they use ChatGPT. Neuroimaging tools measure how things affect brain activity, so these are, potentially, the most "reliable" sources (compared to self-report surveys and behavioral testing)...

- YOU STOP QUESTIONING OUTPUTS

There's a robust body of evidence on what researchers variously call "cognitive surrender," "automation bias," and "cognitive offloading," concepts clustered around the tendency to accept AI outputs without much or any scrutiny.

- YOU LEARN LESS, YOU LEARN MORE

The education research reveals a clean split between 1) raw AI access consistently harms learning and 2) AI designed specifically for teaching can dramatically improve it. The variable is whether AI replaces the cognitive work or scaffolds it.

- YOU GET LONELIER (OR DO YOU?)

The psychological research on AI chatbots and emotional well-being is the most conflicted area in the literature. I've included this here because AI affects learning and cognition, but also emotion and psychological behavior.

- THE META-ANALYSES

A few meta-analyses (there are not many) have attempted to reconcile the contradictory findings, particularly on learning.

...The meta-analyses confirm the paradox we've been seeing so far at scale: The aggregate effect size for immediate performance is large and reliable, whereas the aggregate effect on the cognitive processes that produce independent capability—higher-order thinking, metacognition, self-efficacy, transfer—is small, null, or unmeasured. They measure what's easy to measure (test scores) and largely miss what's hard (whether the person is developing or atrophying).

- THEORETICAL FRAMEWORKS

Several papers have proposed theoretical frameworks to explain why AI affects cognition the way it does. These are conceptual models that organize the evidence.

...When taking that path is frictionless and produces good-enough outputs, the effortful alternative (actual thinking) is harder to justify in the moment. They describe the same phenomenon that the empirical studies find: the gap between what AI does for the output and what it does to the person producing it.

- WHAT THE PUBLIC SAYS

The public is right to worry, but the worry is unfocused. The Pew data show that people sense AI threatens cognition and relationships, and the research in this compilation largely confirms that intuition. But the public framing is binary (AI good or AI bad) when the research points to something else: the same technology produces opposite effects depending on implementation. The gap between public perception and scientific evidence is about what variable matters most, the presence of the technology vs how it's used.

- GLOBAL CONCLUSIONS

Core finding: A performance-competence dissociation as a function of implementation design, not technology

What we don't know

Four critical gaps/limitations remain:

- No long-term neuroimaging. The MIT EEG study tracked participants across four sessions. No study has measured brain changes from sustained AI use over months or years (the technology is perhaps too young for that; should be a priority). The “cognitive debt” finding—suppressed brain activity persisting even after AI was removed—is preliminary.

- Individual differences are poorly mapped. Who is most vulnerable to cognitive surrender? Who resists it? The Wharton study identified trust in AI as the key predictor; the Swiss study found age and education matter. But the interaction between personality, cognitive style, expertise, and AI vulnerability is largely unexplored. I've long observed that AI is an “enhancer of natural disposition,” meaning that it makes you more of what you already are, but this is purely anecdotal.

- Children are almost unstudied. I found one fMRI study of 31 children. That's it. Meanwhile, 64% of U.S. teens use AI chatbots and 30% use them daily. They are the most exposed group and the one that has its future at stake the most. The developmental neuroscience of AI use—the effects on brains that are still forming—is the most urgent gap in the entire field.

- A science vs technology time delay. Because science is slower than technological development, most of the studies are conducted with old models (e.g., GPT-4o, GPT-3.5, etc.).

Meet the Scope Creep Kraken Tim O'Brien at O'Reilly

AI didn't invent scope creep. It just removed the friction that used to stop it.

...Scope creep is older than AI, of course. Software teams have been haunted by "while we're at it" long before anybody was pasting stack traces into a chat window. What AI changed was the rate of growth. In the old version of this problem, extra scope still had to fight its way through staffing constraints. Somebody had to build the feature, debug it, test it, and explain why it belonged. That friction was often the only thing standing between a focused project and an over-extended team.

AI broke that.

Now the extra feature often arrives with a demo attached. "Could we add multi-language support?" Forty-five seconds later, there is a branch. "What about generated documentation?" Sure, why not? "Could the CLI accept natural language commands?" The model appears optimistic, which is enough to make the whole thing sound temporarily reasonable. Each addition looks manageable in isolation. That is how the Kraken works. It does not attack all at once. It wraps around the project one small grip at a time.

...For a while, the Kraken even looks helpful. Output goes up. Screens appear. Branches multiply. People feel productive, and sometimes they really are productive in the narrow local sense. What gets hidden in that burst of visible progress is integration cost. Every tentacle has to be tested with every other tentacle. Every generated convenience becomes a maintenance obligation. Every small addition pulls the project a little farther from the problem it originally set out to solve.

20iv26

Scenario Planning for AI and the "Jobless Future" O'Reilly

...In a scenario planning exercise, you identify two key uncertainties and draw them as crossing vectors, dividing the possibility space into four quadrants. Each quadrant describes a different future. The power of the technique is that you don't bet on one quadrant. You look for actions that make the most sense across all of them. And you're not limited to doing this for only one uncertainty. You can repeat the exercise multiple times, each time expanding your sense of possible futures and clarifying your convictions about the most robust strategies for adapting to them.

For AI and jobs, the most obvious crossing vectors to model might seem to be how fast AI grows in its ability to replace human work and how quickly that capability is adopted. This is, in effect, scenario planning about whether the "AI is unprecedented" or "AI is normal technology" camp is correct. That might well be a useful pair of axes.
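(My own aside, not from the article: a minimal Python sketch of the two-axis exercise described above. The axis labels are borrowed loosely from the excerpt; the helper names, the example actions, and their scores are hypothetical, and "makes sense across all quadrants" is read here as "worst-case score meets a threshold", which is only one possible interpretation.)

```python
from itertools import product

# Two uncertainties, each with two outcomes (labels are illustrative).
AXES = {
    "capability growth": ("slow", "fast"),
    "adoption speed": ("slow", "fast"),
}


def quadrants(axes):
    """Return every combination of axis outcomes as a list of dicts."""
    names = list(axes)
    combos = product(*(axes[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


def robust_actions(scores, threshold):
    """Keep actions whose worst score across all scenarios meets the threshold.

    `scores` maps an action name to one score per quadrant (made-up numbers).
    """
    return [
        action
        for action, per_scenario in scores.items()
        if min(per_scenario) >= threshold
    ]


scenarios = quadrants(AXES)  # four futures, one per quadrant

# Hypothetical scores, one per quadrant, in the order of `scenarios`.
example_scores = {
    "retrain broadly": [3, 4, 4, 5],
    "bet on one future": [5, 1, 1, 5],
}
robust = robust_actions(example_scores, threshold=3)
```

With these invented numbers only "retrain broadly" survives, because "bet on one future" does badly in two of the four quadrants. Repeating the exercise with a different pair of axes just means passing a different `AXES` dictionary.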

21iv26

Dark Factories: Rise of the Trycycle Dan Shapiro at O'Reilly

22iv26

Some Unknown Group Is Reportedly Using Claude Mythos Without Permission gizmodo

SpaceX Obtains Option to Buy Cursor for $60 Billion gizmodo

...before there was Claude Code there was Cursor, one of the original vibe coding apps, which launched in 2023. Last year, when Cursor's parent, Anysphere, raised a $105 million funding round, it was valued at $2.5 billion. By my math, SpaceX apparently thinks the Cursor product alone may be worth 24 times that amount.
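(My own check of the "24 times" figure, using only the two numbers quoted in the excerpt; the variable names are mine.)

```python
# Figures as quoted in the excerpt above, in US dollars.
option_price_usd = 60_000_000_000    # reported option price for Cursor
prior_valuation_usd = 2_500_000_000  # valuation at the earlier funding round

# Ratio of the option price to the earlier valuation.
multiple = option_price_usd / prior_valuation_usd
print(multiple)  # 24.0
```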

Your Conversations With AI Are Now On Sale John Battelle

Data-driven performance advertising built the modern internet, warts and all. Data has become the most valuable resource in our economy, and the world's most profitable companies have all organized around enclosing, extracting, processing, refining, and exploiting this new asset class.

Yesterday, OpenAI released its first performance advertising product. Marketers can now purchase "cost per click" advertising on ChatGPT, which means they can compare how money spent on OpenAI measures up to similar platforms like Google, Meta/Instagram, Apple, and Amazon, among many, many others. And if OpenAI's offerings fail to compete, the company will have no choice but to modify its products to drive better performance.

...The data we create as we pour our hopes, fears, intimacies, questions, and personal narratives into the insatiable maw of an AI chatbot is being enclosed and exploited by the very same business model that bequeathed us Facebook.

It was inevitable that OpenAI would meet the Internet at its most profitable nexus. Now that it has, the incentive structures of performance advertising will forever imprint the fabric of our interactions with AI, and by extension, our understanding of the world.